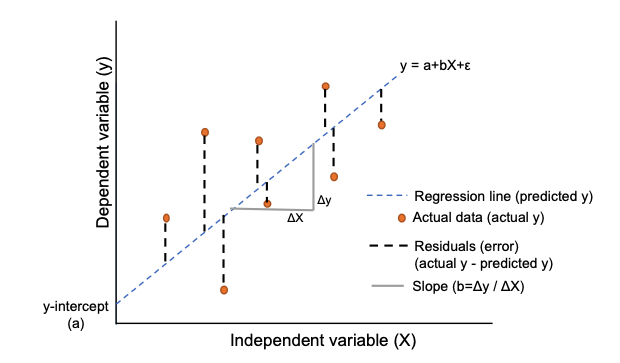

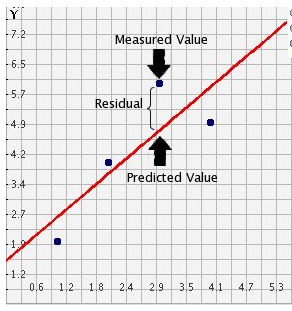

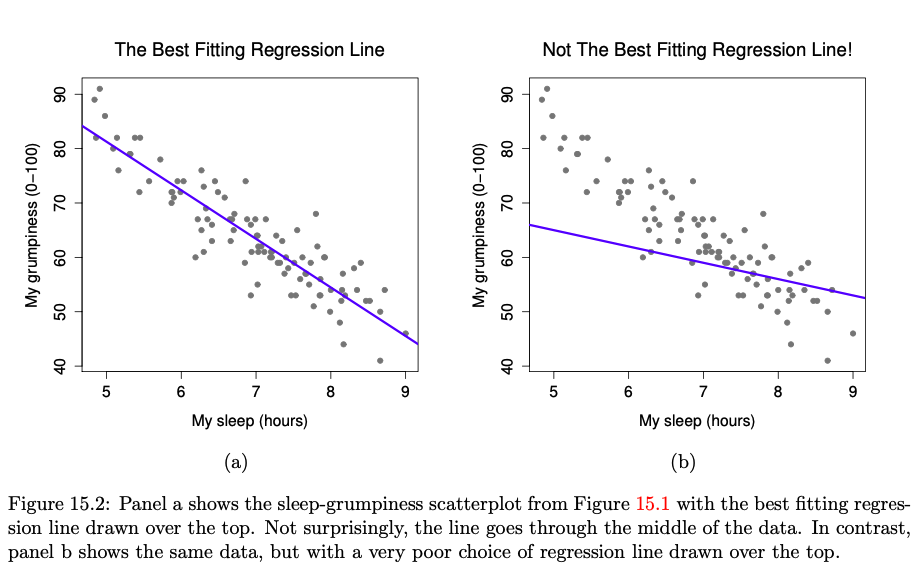

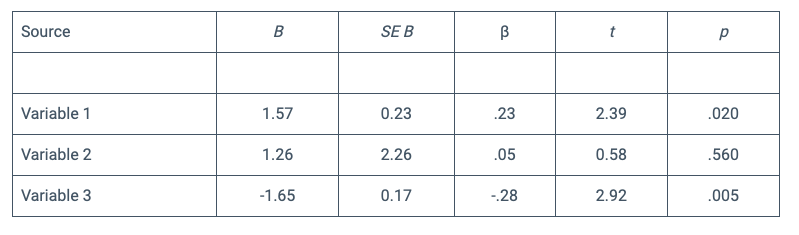

class: center, middle, inverse, title-slide # Regression analysis - linear regression ## ⚔<br/>with xaringan ### Goran Kardum ### Department of Psychology ### 2022-05-16 --- ``` ## Loading required namespace: bibtex ``` --- How to predict value between variables in the research? --- # Regression analysis - any of several statistical techniques that are used to describe, explain, or predict (or all three) the variance of an outcome or dependent variable using scores on one or more predictor or independent variables (APA dictionary, 2022). -- - Regression analysis is a subset of the general linear model (GLM). It yields a regression equation as well as an index of the relationship between the dependent and independent variables (APA dictionary, 2022). -- - For example, a regression analysis could show the extent to which 1st-year grades in college (outcome) are predicted by such factors as standardized test scores, courses taken in high school, letters of recommendation, and particular extracurricular activities (APA dictionary, 2022). --- ## Types of regression analysis - Linear regression -- - Logistic regression -- - Multinomial Logistic Regression -- - Ordinal Logistic Regression --- ## Types of regression analysis - Simple and Multiple regression analysis -- - Linear and non-linear regression analysis -- - Hierarchical regression (compare the two models in hypothesis testing) --- ## Linear Regression - Linear Regression is one of the most widely used regression techniques to model the relationship between two variables. -- - analysis of the relationship between interval scale predictors and interval scale outcomes -- - It uses a linear relationship to model the regression line. There are 2 variables used in the linear relationship equation i.e., predictor variable and response variable. -- - The regression line created using linear calculation behind that a straight line. The response variable is derived from predictor variables. Predictor variables are estimated using some research design and experiments. --- ## y = ax + b y is the response variable (dependent variable, criteria), -- x is the predictor variable (independent variable), -- a - represents the slope of the line and that value determines how much the variable Y will change when X changes by one unit. -- b - represents the y intercept of the line and that value identifies the value of Y when X is zero. b=r*sy/sx. This is not an independent variable but rather our estimate of the dependent variable when all predictors in the model are set to 0. --- ## Regression line  --- # Residuals - residuals = actual y - predicted y -- - The sum and mean of residuals is always equal to zero. Why? Because the regression line is in the middle, optimally going through the regression cloud  --- ## The best vs not the best fitting regression line (Navarro, 2019)  --- ## Regression analysis steps 1. What the author of regression analysis is trying to do? Knowing what the variables are and how they are distributed have great implications for how reading the regression analysis. Descriptive statistics and plotting the data must be done before RA! Try to check aim of the study, research design and of course descriptive statistics with plotting. -- 2. Remove outliers because that allows you to see how robust your model is: if the error for the outliers is low, then it’s a win for the model; if the error is still high, then they are still outliers. -- 3. Remove all NA values of the dependent variable: with function drop_na() in tidyverse. -- 4. normalize the data - that is the best way to compare coefficients of independent variables. If not - coefficients ranging widely -- 5. Understanding and interpretation the regression table values -- 5. p-value significance is an indicator of certainty. If a coefficient is high and if it is not statistically significant - problem for model interpretation. --- ## Assumptions of regression - Normality -- - Linearity -- - Homogeneity of variance -- - Uncorrelated predictors -- - Residuals are independent of each other -- - No bad outliers --- ## R model syntax - y ~ x main effect of x on y, y predicted by x -- - y ~ x + z main effects of x and z on y, y predicted by both x and z -- - y ~ x:z interaction of x and z on y, y predicted by the interaction of x and z -- - y ~ x + z + x:z main and interaction effects of x and z on y -- - next slide: simple example in R --- ```r library(car) ``` ``` ## Loading required package: carData ``` ```r lm_car <- lm(dist ~ speed, data=cars) summary(lm_car) ``` ``` ## ## Call: ## lm(formula = dist ~ speed, data = cars) ## ## Residuals: ## Min 1Q Median 3Q Max ## -29.069 -9.525 -2.272 9.215 43.201 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) -17.5791 6.7584 -2.601 0.0123 * ## speed 3.9324 0.4155 9.464 1.49e-12 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 15.38 on 48 degrees of freedom ## Multiple R-squared: 0.6511, Adjusted R-squared: 0.6438 ## F-statistic: 89.57 on 1 and 48 DF, p-value: 1.49e-12 ``` --- ## Read regression analysis output - Estimate value: regression coefficient provides the expected change in the dependent variable for a one-unit increase in the independent variable. It could be positive or negative (As the independent variable increases, the dependent variable decreases). -- - Standard error: For each independent variable, we expect to be wrong in our predictions and our estimate of the standard deviation of the coefficient. --- ## Coefficient of determination - proportion of the variance in the outcome variable that can be accounted for by the predictor -- - R squared = 1 - SSres/SStot -- - Total sum of squares and sum of squares of residuals --- ## Symbols Used (APA-Style Regression Table)  --- ## Interpretation symbols from APA stlyle table of RA - The first symbol is the unstandardized beta (B). This value represents the slope of the line between the predictor variable and the dependent variable. For every one unit increase in variable 1, the dependent variable increases by appropriate units. - The next symbol is the standard error for the unstandardized beta (SE B). This value is similar to the standard deviation for a mean. The larger the number, the more spread out the points are from the regression line. The more spread out the numbers are, the less likely that significance will be found. - The third symbol is the standardized beta (β). This works very similarly to a correlation coefficient. It will range from 0 to 1 or 0 to -1, depending on the direction of the relationship. The closer the value is to 1 or -1, the stronger the relationship. - The fourth symbol is the ttest statistic (t). This is the test statistic calculated for the individual predictor variable. This is used to calculate the p value. This tells whether or not an individual variable significantly predicts the dependent variable. --- # Logistic Regression - Logistic regression is presented as the statistical method of choice for analyzing the effects of independent variables on a binary dependent variable in terms of the probability of being in one of its two categories vs the other. -- - The dependent variable could be some kind of treatment: treatment with behaviourally-oriented psychotherapy vs treatment with psychoanalytically-oriented psychotherapy, and the independent variables are several patient and clinician characteristics. -- - Like ordinary multiple regression, the method is shown capable of analyzing categorical as well as continuous independent variables. Unlike ordinary multiple regression when applied to binary data, logistic regression analysis necessarily yields estimated probabilities that lie between 0 and 1. -- - The measure of association derived from logistic regression analysis - the odds ratio. -- - Logistic regression is one example of the generalized linear model (glm). --- ## APA dictionary (https://dictionary.apa.org/) a form of regression analysis used when the outcome or dependent variable may assume only one of two categorical values (e.g., pass or fail) and the predictors or independent variables are either categorical or continuous. For example, a researcher could use logistic regression to determine the likelihood of graduating from college (yes or no) given such student information as high school grade point average, college admissions test score, number of advanced placement courses taken in high school, socioeconomic status, and gender. --- --- # References - psych package (http://personality-project.org/r/psych/) -- - CRAN Task View: Teaching Statistics (https://cran.r-project.org/web/views/TeachingStatistics.html) -- - Barret Schloerke, JJ Allaire and Barbara Borges (2020). learnr: Interactive Tutorials for R. R package version 0.10.1. https://CRAN.R-project.org/package=learnr -- - Navarro, D. (2019). Learning statistics with R: A tutorial for psychology students and other beginners. University of New South Wales: Australia. --- class: center, middle # Thanks! Slides created via the R package [**xaringan**](https://github.com/yihui/xaringan). The chakra comes from [remark.js](https://remarkjs.com), [**knitr**](https://yihui.org/knitr/), and [R Markdown](https://rmarkdown.rstudio.com).