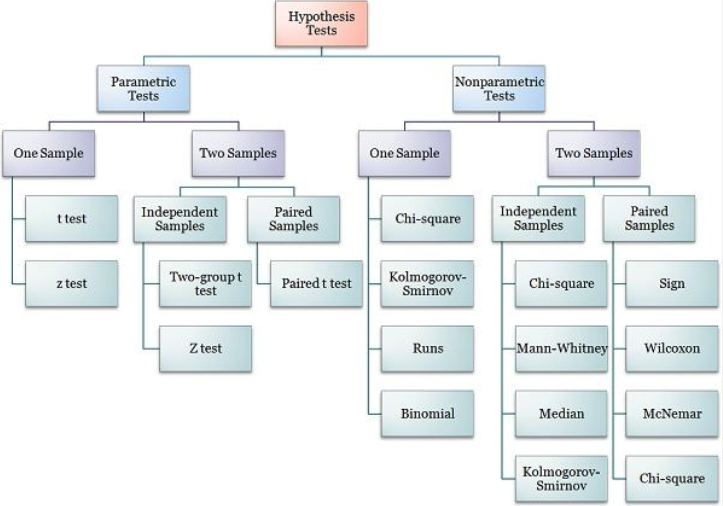

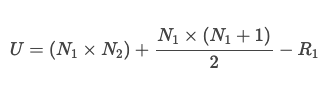

class: center, middle, inverse, title-slide

# Nonparametric statistics
## ⚔<br/>with xaringan
### Goran Kardum
### Department of Psychology
### 2022-06-04

---

How can we carry out statistical inference when the data are not normally distributed and we know little about the population distribution?

---

# Definitions

- Non-parametric (or distribution-free) tests do not assume that the distributions being compared are normal. They are statistical alternatives for situations in which the assumption of normality does not hold.

--

- They are called "non-parametric tests" because they do not estimate the parameters (such as the mean and variance) of a model based on a normal distribution.

--

- The traditional non-parametric tests are primarily rank-based. Instead of using the numeric values of the dependent variable, they convert the dependent variable into relative ranks.

---

## Conversion to ranks

```r
# Example adapted from similar code at rcompanion.org (S. Mangiafico)
Height = c(158, 165, 169, 164, 173, 173, 179, 190)
names(Height) = letters[1:8]
Height
```

```
##   a   b   c   d   e   f   g   h 
## 158 165 169 164 173 173 179 190 
```

```r
# Tied values (e and f) receive the average of the ranks they occupy
rank(Height)
```

```
##   a   b   c   d   e   f   g   h 
## 1.0 3.0 4.0 2.0 5.5 5.5 7.0 8.0 
```

--

Some non-parametric tests (for example, the sign test) go one step further and reduce each data point to a sign. Each observation is compared with a reference value (the value around which the data are expected, under the null hypothesis, to fall): it is assigned '+' if it is greater than the reference value and '-' if it is lower. The numbers of '+' and '-' signs are then analysed instead of the original values (for the sign test, with a binomial test).

---

## When?

- When data are nominal, i.e. assigned to distinct groups or categories with no inherent order (e.g. responses to 'What is your ethnicity?').

--

- When data are ordinal, i.e. have a set order or scale (e.g. 'Rate your anger from 1 to 10').
--

- When outliers have been identified in the data set.

--

- When data were collected from a small sample.

---

## Non-parametric tests share these traits:

- Researchers use them less than they should (they are not as well known).

--

- They are applicable to ranked (ordinal) data.

--

- They can be used when two series of observations come from different populations (populations in which the variable is not equally distributed).

--

- They are often the only realistic alternative when the sample size is small, because normality cannot then be checked reliably.

---

## Non-parametric tests are used when the following criteria apply:

- At least one assumption of the corresponding parametric test is violated. For example, parametric tests assume homogeneity of variance (homoscedasticity): the amount of 'noise' (potential experimental error) should be similar in each group.

--

- The data are not normally distributed; for example, they are skewed.

--

- Randomness: the data are a random sample from the target population.

--

- Independence: the observations are not correlated with one another. Measurements from one participant should not be influenced by, or associated with, those of other participants.

---

## Advantages of non-parametric tests

Research using non-parametric tests has several advantages:

--

- The computations are based on signs or ranks, so outliers in the data set are unlikely to distort the analysis.

--

- They are appropriate even when the sample size is small.

--

- They are less restrictive than parametric tests because they require fewer assumptions, so they can be applied in a wider range of situations.

--

- They can have more statistical power than parametric tests when the assumptions of the parametric tests are violated. This is because ranks, like the median, are barely affected by outliers, whereas the mean can be pulled far away from the bulk of the data.
--

- Several non-parametric tests have been standard in psychology research for many years: the chi-square test, Fisher's exact probability test, and Spearman's rank correlation.

---

## Advantages of nonparametric tests

- Most of the traditional nonparametric tests presented here are relatively common.

--

- They are appropriate for interval/ratio or, often, ordinal dependent variables.

--

- Their nonparametric nature makes them appropriate for data that don't meet the assumptions of parametric analyses. These include data that are skewed, non-normal, contain outliers, or, possibly, are censored. (Censored data are data in which values beyond an upper or lower limit are not recorded exactly; for example, ages under 5 reported only as "under 5".)

---

## Disadvantages of non-parametric tests

Non-parametric tests also have disadvantages that we should consider:

--

- The mean is considered the standard measure of central tendency because it uses every data point: if any value changes, the mean changes. This is not always true of the median or of ranks, so rank-based analyses discard some of the information in the data.

--

- Partly for this reason, when the assumptions of a parametric test are actually met, the non-parametric alternative is usually less powerful. There is then an increased risk of a Type II error (a 'false negative': failing to reject the null hypothesis when it is false), which weakens the conclusions that can be drawn.

--

- Traditional non-parametric tests are often used for hypothesis testing only: the test itself returns a p-value, not an effect size (a quantitative value that tells you how large a difference or relationship is) or a confidence interval. Unless these are computed separately, researchers cannot tell how much the independent variable affects the dependent variable, which limits the practical utility of the findings and makes their **validity** harder to establish.
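As a hedged illustration that an interval estimate can be obtained alongside a rank-based test, the sketch below asks base R's `wilcox.test()` for an estimate of the location shift (a Hodges–Lehmann-type estimate, related to the median of all pairwise differences) and its confidence interval via `conf.int = TRUE`. It reuses the Pooh/Piglet Likert scores from the Mann–Whitney example later in the slides. Because those scores contain ties, R falls back to a normal approximation and issues warnings, which are suppressed here; this is an illustrative sketch, not part of the original analysis.

```r
# Pooh/Piglet Likert scores (same values as the Mann-Whitney example)
piglet <- c(2, 4, 2, 2, 1, 2, 3, 2, 2, 3)
pooh   <- c(3, 5, 4, 4, 4, 4, 4, 4, 5, 5)

# conf.int = TRUE adds an estimate of the difference in location
# (piglet - pooh) and a confidence interval for it. With tied data,
# R warns that exact values cannot be computed, so warnings are
# suppressed for this illustration.
res <- suppressWarnings(
  wilcox.test(piglet, pooh, conf.int = TRUE, conf.level = 0.95)
)

res$estimate   # estimated location shift
res$conf.int   # 95% confidence interval for the shift
```

Reporting the estimate and interval together with the p-value gives readers a sense of how large the difference is, not only whether it is statistically significant.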
---

## Disadvantages of nonparametric tests

- These tests are typically named after their authors, with names like Mann–Whitney, Kruskal–Wallis, and Wilcoxon signed-rank. It may be difficult to remember these names, or to remember which test is used in which situation.

--

- Most of the traditional nonparametric tests presented here are limited in the types of experimental designs they can address. They are typically limited to a one-way comparison of independent groups (e.g. Kruskal–Wallis) or to an unreplicated complete block design for paired samples (e.g. Friedman).

--

- More flexible approaches can cover more complex designs. The aligned ranks transformation is one nonparametric approach. Ordinal regression is appropriate when the dependent variable is ordinal. Permutation tests may be applicable in some cases.

--

- Readers are likely to find a lot of contradictory information in different sources about the hypotheses and assumptions of these tests. In particular, authors often treat the hypotheses of some tests as tests of medians and then list the assumptions that correspond to these hypotheses. When this is not explained explicitly, different sources end up listing different assumptions that the underlying populations must meet for the test to be valid, which creates unnecessary confusion for students trying to use these tests correctly.

---

## Interpretation of nonparametric tests

- In general, these tests determine whether there is a systematic difference among groups. This may be due to a difference in location (e.g. median) or in the shape or spread of the distribution of the data. With the Mann–Whitney and Kruskal–Wallis tests, the difference among groups that is of interest is the probability of an observation from one group being larger than an observation from another group.
  If this probability is 0.50, the groups are said to be "stochastically equal"; when it is far from 0.50, one group is said to show "stochastic dominance" over the other.

--

Optional technical note: without additional assumptions about the distribution of the data, the Mann–Whitney and Kruskal–Wallis tests do not test hypotheses about the group medians. Mangiafico (2015) and McDonald (2014) give an example of a significant Kruskal–Wallis test where the groups have identical medians but differ in their stochastic dominance.

---

## Classification of non-parametric tests

- Non-parametric tests for one sample

--

- Non-parametric tests for two related samples

--

- Non-parametric tests for K related samples

--

- Non-parametric tests for two independent samples

--

- Non-parametric tests for K independent samples

--

- Permutation tests

---

## Non-parametric tests for one sample

Pearson's chi-squared test

This test is useful when a researcher wants to analyze the relationship between two categorical (qualitative) variables. As a goodness-of-fit test, it also evaluates whether the observed (empirical) distribution of a categorical variable fits a specified theoretical distribution (uniform, binomial, multinomial, etc.).

--

Binomial test

This test checks whether a dichotomous variable follows a specified probability model. It makes it possible to test the hypothesis that the observed proportion (e.g. of correct answers) matches the theoretical proportion of a binomial distribution.
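The '+'/'-' procedure described at the start of these slides is the basis of the sign test, which is a binomial test on the number of '+' signs with p = 0.5. A minimal sketch in base R, assuming a hypothetical reference (hypothesised median) value of 160 cm for the Height data from the ranking example; the value 160 is chosen only for illustration.

```r
# Height data from the ranking example
Height <- c(158, 165, 169, 164, 173, 173, 179, 190)
names(Height) <- letters[1:8]

# Hypothetical reference value (hypothesised median), for illustration only
ref <- 160

# Assign signs: +1 above the reference, -1 below, 0 if equal (dropped)
signs <- sign(Height - ref)
signs <- signs[signs != 0]

n_plus <- sum(signs > 0)   # number of '+' signs
n      <- length(signs)    # number of non-tied observations

# Under H0 (median = ref), '+' and '-' are equally likely, so test p = 0.5
res <- binom.test(n_plus, n, p = 0.5)
res$p.value
```

Here 7 of the 8 heights lie above 160, and the exact two-sided binomial p-value is 18/256 (about 0.07).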
--

Runs test

This test determines whether the number of runs (R) observed in a sample of size n is large enough or small enough to reject the hypothesis that the observations are independent (random). The **Wald–Wolfowitz runs test**, named after the statisticians Abraham Wald and Jacob Wolfowitz, is a non-parametric test that checks a randomness hypothesis for a two-valued data sequence.

--

Kolmogorov-Smirnov (K-S) test

This test is used to test the null hypothesis that the distribution of a variable fits a specified theoretical probability distribution (e.g. normal, exponential, or Poisson). Knowing whether the data fit a given distribution helps determine which data analysis techniques are best suited to the specific situation.

---

## Non-parametric tests for two related samples

- McNemar test

  Statisticians use the McNemar test to test hypotheses about the equality of proportions for a dichotomous variable. It is useful when each subject is measured twice (e.g. once before and once after a specific event).

--

- Sign test

  This test allows researchers to test the hypothesis that the median of the paired differences is zero, i.e. that two related population medians are equal. It can also be used to find out whether one variable tends to be greater than another, and it can be adapted to test for a trend in a series of observations.

--

- Wilcoxon signed-rank test

  The Wilcoxon signed-rank test also tests the hypothesis that two related populations have equal medians (strictly, that the paired differences are symmetric around zero). Unlike the sign test, it uses the ranks of the absolute differences as well as their signs.

---

## Non-parametric tests for K related samples

- Friedman test

  This test extends the comparison of related samples to more than two conditions. Researchers use it for data recorded at more than two points in time or under three or more conditions, for example when one subject from each matched group (block) has been randomly assigned to each of the three or more conditions.
--

- Cochran's Q test

  This is analogous to the Friedman test but applies when all the responses are binary. Cochran's Q tests the hypothesis that several related dichotomous variables have the same mean (i.e. the same proportion of "successes").

--

- Kendall's W

  This uses the same data layout as the Friedman test. In research, however, it is used primarily to measure the concordance (agreement) among rankings, for example among several raters.

---

## Non-parametric tests for two independent samples

- Mann-Whitney U test

  This is equivalent to the Wilcoxon rank-sum test and to the Kruskal-Wallis test with two groups.

--

- Kolmogorov-Smirnov test

  Researchers use this test to test the hypothesis that two samples come from the same population (distribution).

--

- Wald-Wolfowitz runs test

  This tests whether two independent samples come from populations with the same distribution.

--

- Moses test of extreme reactions

  This tests whether two distributions differ in their degree of dispersion or variability. It focuses on the spread of the control group and measures how strongly the extreme values of the experimental group affect that spread when the two groups are combined.

---

## Non-parametric tests for K independent samples

- Median test

  This test compares two or more groups with respect to their median. It is a chi-squared test on the counts of observations above and below the overall (pooled) median in each group.

--

- Jonckheere-Terpstra test

  This test is designed for ordered alternatives: it is the most appropriate choice when you expect, in advance, that the K populations from which the samples were drawn are ordered (ascending or descending).

--

- Kruskal-Wallis H test

  Lastly, the Kruskal-Wallis H test is an extension of the Mann-Whitney U test to more than two groups and represents an excellent alternative to one-way ANOVA.

---

## Classification

---

## Wilcoxon rank sum test

- The Wilcoxon rank-sum test (also called the Mann-Whitney test) is a non-parametric alternative to the two-sample (independent) t-test. The Mann-Whitney U test is equivalent to a two-sample Wilcoxon rank-sum test.
  It can also be considered equivalent to the Kruskal–Wallis test with only two groups.

--

- It tests the hypothesis that the distributions in the two groups have the same median (strictly, only under the shape condition in the last point).

--

- Dependent variable is ordinal, interval, or ratio

--

- Independent variable is a factor with two levels. That is, two groups

--

- Observations between groups are independent. That is, not paired or repeated measures data

--

- The distributions of values for each group need to be of similar shape and spread. Otherwise, the test is typically a test of stochastic equality.

---

## Appropriate data (Mann-Whitney U test)

- Two-sample data. That is, one-way data with two groups only

--

- Dependent variable is ordinal, interval, or ratio

--

- Independent variable is a factor with two levels. That is, two groups

--

- Observations between groups are independent. That is, not paired or repeated measures data

--

- In order to be a test of medians, the distributions of values for each group need to be of similar shape and spread. Otherwise, the test is typically a test of stochastic equality.

---

## Methodology of M-W U test

- State the null and alternative hypotheses.

--

- Rank all of the scores (from both groups together) from the smallest to the largest. Equal scores are allocated the average of the ranks they would have if there were tiny differences between them.

--

- Next, sum the ranks for each group. Call the sum of the ranks for the larger group R1 and for the smaller group R2. If both groups are the same size, they can be labelled either way round.
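The next step, computing U from the rank sums, is commonly written as U = R - n(n + 1)/2 for each group; the two U values always add up to n1 * n2, and the smaller one is compared with tabulated critical values (or converted to a z score for larger samples). A minimal sketch of these steps in base R, using the Pooh/Piglet Likert scores from the example that follows:

```r
# Likert scores from the Pooh/Piglet example
piglet <- c(2, 4, 2, 2, 1, 2, 3, 2, 2, 3)
pooh   <- c(3, 5, 4, 4, 4, 4, 4, 4, 5, 5)
n1 <- length(piglet)
n2 <- length(pooh)

# Rank all scores together; tied scores get the average of their ranks
ranks <- rank(c(piglet, pooh))

# Sum the ranks for each group
R_piglet <- sum(ranks[1:n1])
R_pooh   <- sum(ranks[(n1 + 1):(n1 + n2)])

# U for each group: its rank sum minus the smallest possible rank sum
U_piglet <- R_piglet - n1 * (n1 + 1) / 2
U_pooh   <- R_pooh   - n2 * (n2 + 1) / 2

# U_piglet + U_pooh equals n1 * n2; the smaller value is the test statistic
U <- min(U_piglet, U_pooh)
c(R_piglet = R_piglet, R_pooh = R_pooh,
  U_piglet = U_piglet, U_pooh = U_pooh, U = U)
```

The rank sums are 60 (Piglet) and 150 (Pooh), giving U = 5 for Piglet. This matches the W = 5 that `wilcox.test()` reports for the same data, because R's W is the U statistic of the first factor level (Piglet).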
---

- Two-sample Mann-Whitney U test example following the rcompanion materials (Mangiafico, 2022)

--

```r
Data = read.table(header=TRUE, stringsAsFactors=TRUE, text="
Speaker  Likert
Pooh      3
Pooh      5
Pooh      4
Pooh      4
Pooh      4
Pooh      4
Pooh      4
Pooh      4
Pooh      5
Pooh      5
Piglet    2
Piglet    4
Piglet    2
Piglet    2
Piglet    1
Piglet    2
Piglet    3
Piglet    2
Piglet    2
Piglet    3
")
```

---

```r
### Create a new variable which is the Likert scores as an ordered factor
Data$Likert.f = factor(Data$Likert, ordered = TRUE)
```

```r
xtabs( ~ Speaker + Likert.f, data = Data)
```

```
##         Likert.f
## Speaker  1 2 3 4 5
##   Piglet 1 6 2 1 0
##   Pooh   0 0 1 6 3
```

```r
XT = xtabs( ~ Speaker + Likert.f, data = Data)
prop.table(XT, margin = 1)
```

```
##         Likert.f
## Speaker    1   2   3   4   5
##   Piglet 0.1 0.6 0.2 0.1 0.0
##   Pooh   0.0 0.0 0.1 0.6 0.3
```

---

```r
library(lattice)
histogram(~ Likert.f | Speaker,
          data=Data,
          layout=c(1,2)      # columns and rows of individual plots
          )
```

---

- Summarize data of Likert scores

```r
library(FSA)
```

```
## ## FSA v0.9.3. See citation('FSA') if used in publication.
## ## Run fishR() for related website and fishR('IFAR') for related book.
```

```r
Summarize(Likert ~ Speaker, data=Data, digits=3)
```

```
##   Speaker  n mean    sd min Q1 median   Q3 max
## 1  Piglet 10  2.3 0.823   1  2      2 2.75   4
## 2    Pooh 10  4.2 0.632   3  4      4 4.75   5
```

--

```r
wilcox.test(Likert ~ Speaker, data=Data)
```

```
## Warning in wilcox.test.default(x = c(2L, 4L, 2L, 2L, 1L, 2L, 3L, 2L, 2L, :
## cannot compute exact p-value with ties
```

```
## 
## 	Wilcoxon rank sum test with continuity correction
## 
## data:  Likert by Speaker
## W = 5, p-value = 0.0004713
## alternative hypothesis: true location shift is not equal to 0
```

---

- The Mann-Whitney test can also be conducted as an exact test or with a Monte Carlo approximation (coin and exactRankTests packages)

```r
library(coin)
```

```
## Loading required package: survival
```

```r
wilcox_test(Likert ~ Speaker, data=Data, distribution = "exact")
```

```
## 
## 	Exact Wilcoxon-Mann-Whitney Test
## 
## data:  Likert by Speaker (Piglet, Pooh)
## Z = -3.5358, p-value = 0.0002382
## alternative hypothesis: true mu is not equal to 0
```

```r
# Monte Carlo (resampling) approximation: the p-value varies slightly
# between runs unless set.seed() is called first
wilcox_test(Likert ~ Speaker, data=Data, distribution = "approximate")
```

```
## 
## 	Approximative Wilcoxon-Mann-Whitney Test
## 
## data:  Likert by Speaker (Piglet, Pooh)
## Z = -3.5358, p-value = 2e-04
## alternative hypothesis: true mu is not equal to 0
```

---

```r
library(exactRankTests)
```

```
## Package 'exactRankTests' is no longer under development.
## Please consider using package 'coin' instead.
```

```
## 
## Attaching package: 'exactRankTests'
```

```
## The following objects are masked from 'package:coin':
## 
##     dperm, pperm, qperm, rperm
```

```r
wilcox.exact(Likert ~ Speaker, data=Data, exact=TRUE)
```

```
## 
## 	Exact Wilcoxon rank sum test
## 
## data:  Likert by Speaker
## W = 5, p-value = 0.0002382
## alternative hypothesis: true mu is not equal to 0
```

---

## Effect size

- Statistics of effect size for the Mann–Whitney test report the degree to which one group has data with higher ranks than the other group.
  They are related to the probability that a value from one group will be greater than a value from the other group. Unlike p-values, they are not affected by sample size.

--

- **Vargha and Delaney's A** is relatively easy to understand. It reports the probability that a value from one group will be greater than a value from the other group, with ties counted as one half. A value of 0.50 indicates that the two groups are stochastically equal. A value of 1 indicates that the first group shows complete stochastic domination over the other group, and a value of 0 indicates complete stochastic domination by the second group.

--

- **Cliff's delta** is linearly related to Vargha and Delaney's A. It ranges from –1 to 1, with 0 indicating stochastic equality of the two groups. A value of 1 indicates that one group shows complete stochastic dominance over the other group, and a value of –1 indicates complete stochastic domination by the other group. Its absolute value is numerically equal to Freeman's theta.

---

## Effect size statistics

Effect size statistics for traditional nonparametric tests include Cliff's delta and Vargha and Delaney's A for Mann–Whitney, and epsilon-squared and Freeman's "coefficient of determination" (Freeman's theta) (Freeman, 1965) for Kruskal–Wallis. The rank-biserial correlation is appropriate for Mann–Whitney and the paired signed-rank test. Kendall's W can be used for Friedman's test. Two accessible resources on effect sizes for these tests are Tomczak and Tomczak (2014) and King and Rosopa (2010).

Some of the effect size statistics included here determine the degree to which one group has data with higher ranks than other groups. They tend to vary from 0 (groups have data that are stochastically equal) to 1 (one group, the first, stochastically dominates) or –1 (the other, second, group stochastically dominates). They are related to the probability that a value from one group will be greater than a value from another group.
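Both statistics can be computed directly from all pairwise comparisons between the two groups. A minimal base-R sketch for the Pooh/Piglet data, treating Piglet as the first group (the first factor level, as in the `wilcox.test()` output above); the rcompanion package also provides ready-made functions for these statistics, but the hand computation shows what they measure.

```r
piglet <- c(2, 4, 2, 2, 1, 2, 3, 2, 2, 3)   # first group
pooh   <- c(3, 5, 4, 4, 4, 4, 4, 4, 5, 5)   # second group

# Compare every Piglet value with every Pooh value (10 x 10 = 100 pairs)
greater <- outer(piglet, pooh, ">")
less    <- outer(piglet, pooh, "<")
ties    <- outer(piglet, pooh, "==")
n_pairs <- length(piglet) * length(pooh)

# Vargha and Delaney's A: P(X > Y) + 0.5 * P(X = Y)
A <- (sum(greater) + 0.5 * sum(ties)) / n_pairs

# Cliff's delta: P(X > Y) - P(X < Y), which equals 2A - 1
delta <- (sum(greater) - sum(less)) / n_pairs

c(A = A, delta = delta)
```

Because A equals U / (n1 * n2), the result agrees with W = 5 from `wilcox.test()`: A = 5/100 = 0.05 and delta = -0.90, indicating that Pooh (the second group) almost completely stochastically dominates Piglet.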
As rank-based measures, these effect size statistics do not indicate the difference in absolute values between groups. That is, if you replaced the 5s in the Pooh/Piglet example above with 100s, the values of the effect size statistics would not change, because in either case the 5s (or 100s) are the highest-ranked numbers. For a practical interpretation of results, it is usually important to also consider the absolute values of the data, for example with descriptive statistics.

---

# References

- psych package (http://personality-project.org/r/psych/)

--

- CRAN Task View: Teaching Statistics (https://cran.r-project.org/web/views/TeachingStatistics.html)

--

- Hothorn, T., Hornik, K., van de Wiel, M. A., & Zeileis, A. (2008). Implementing a class of permutation tests: The coin package. *Journal of Statistical Software*, *28*(8), 1–23. https://doi.org/10.18637/jss.v028.i08

--

- Mangiafico, S. (2022). rcompanion: Functions to Support Extension Education Program Evaluation. R package version 2.4.15. https://CRAN.R-project.org/package=rcompanion

--

- Navarro, D. (2019). *Learning statistics with R: A tutorial for psychology students and other beginners*. University of New South Wales, Australia.

---

class: center, middle

# Thanks!

Slides created via the R package [**xaringan**](https://github.com/yihui/xaringan).

The chakra comes from [remark.js](https://remarkjs.com), [**knitr**](https://yihui.org/knitr/), and [R Markdown](https://rmarkdown.rstudio.com).